What this work is really about

There are at least four different questions inside the phrase “AI in medical education.” The first is informational: can AI help learners find, summarize, and interrogate the right knowledge? The second is pedagogic: does it improve learning, or merely make tasks feel easier? The third is assessment-related: can it generate better signals about competence than episodic exams and occasional supervision? The fourth is professional: what happens when trainees are no longer judged only by what they execute, but by how they use, supervise, and challenge machine-generated work?

Our view is that the interesting work is not just asking whether AI is useful. It is determining where AI should be used aggressively, where it should be constrained, and where educationally valuable friction still needs to be protected.

How Grover Lab approaches the field

Our approach is less about isolated tools and more about educational infrastructure. We are interested in source-grounded systems, not black boxes; in deliberate practice, not one-off novelty; in continuous performance data, not only retrospective judgment; and in faculty development, because no AI-enabled curriculum will work if the educators themselves cannot model good use.

This is why the current build layer matters. SharpenEDU is the clearest example of that direction. It links curriculum intelligence, repeated learner practice, and multimodal performance assessment into one loop. In practical terms, that means a school can move closer to an environment where curated curricular sources drive explanations, learner practice becomes abundant rather than scarce, and feedback is generated from actual performance rather than only from occasional faculty impressions.

The intellectual logic for that work is visible in Samir Grover’s essays on retrieval-augmented generation in medical education, on curriculum as infrastructure, and, in “The AI agent-augmented physician,” on the changing role of the physician in an agentic era.

Current lines of inquiry

Agents and supervision. As multi-step systems become capable of execution, medical education has to decide what remains a protected rep for the learner and what can be delegated. That argument is developed more explicitly in two essays: “Agentic AI in medical education, part 2” and “I became a good doctor by doing it badly first.”

Source-grounded curriculum systems. We are skeptical of generic AI outputs untethered from approved curricular material. Our interest is in systems that sit on top of curated sources, create traceable drafts, and make curricular change easier to operationalize. That is one reason SharpenEDU matters: it treats the curriculum as a living substrate rather than a static PDF archive.

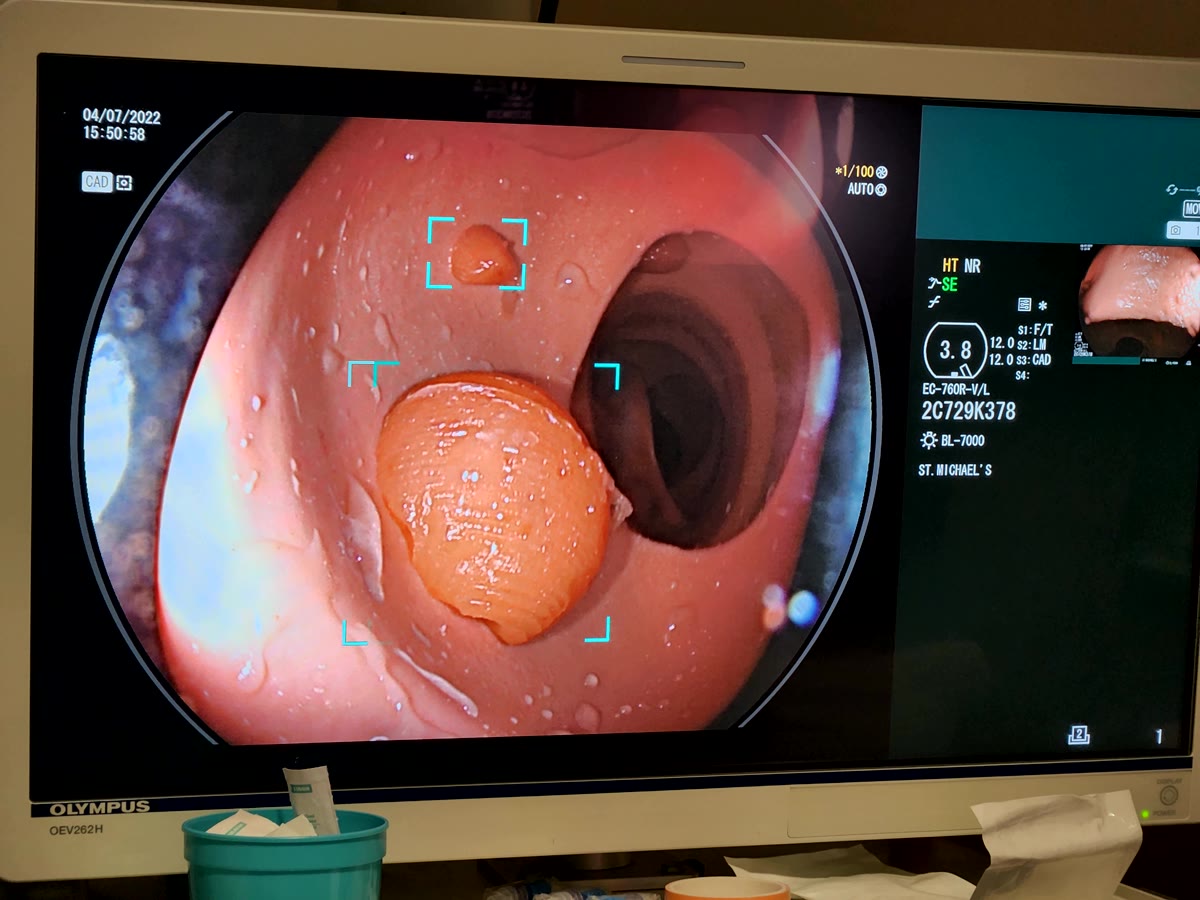

Assessment and feedback. AI becomes more educationally meaningful when it produces better signals about actual performance. This includes natural language processing, video-based review, structured feedback, and the possibility of more ambient assessment models. It also includes the hard question of calibration: how to make learners and clinicians better at judging both themselves and the machine.

Faculty development. AI-literate learners need AI-literate supervisors. The bottleneck is not only student skill. It is whether educators know when to use these systems, how to question them, and how to redesign teaching around them. That is part of the argument in “Who trains the trainers?”

Selected Grover Lab work in this area

Related essays and build work

SharpenEDU

Current educational infrastructure work spanning curriculum intelligence, deliberate practice, and multimodal performance assessment.